Working towards a unified framework for joint audio & visual analytics

Background

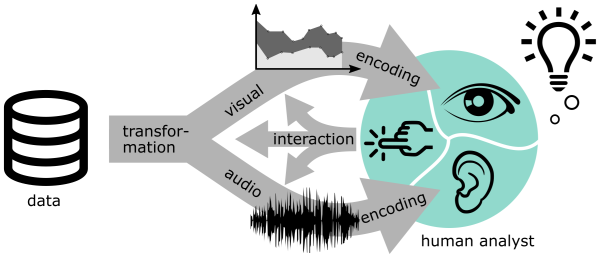

Data analysis, such as the analysis of production processes, includes a number of activities that cannot be automated completely. Therefore, the work of human analysts is often required. Both information visualization and sonification – the representation of data with images or sounds – are considered effective methods to involve humans in data analysis. These two fields utilize the highly sophisticated visual and auditory information processing capabilities of humans. While information visualization approaches explore computer-based, interactive visual representations of data, sonification conveys information through non-speech audio. As the amount and complexity of data grow, both approaches are reaching their limits, as are the human perceptual capacities on which the interpretation of images and sounds depends.

Although extensive research has been carried out on the auditory and visual representation of data, there have been few attempts to combine these two channels in a systematic and complementary manner. So far, not enough attention has been paid to the interrelation of these modalities. Existing research on combinations has often focused on one modality while neglecting the other. A methodical approach that combines information visualization and sonification within a complementary framework is still missing. Because each approach on its own reaches its limits as the volume of complex data grows rapidly, more capable methods are urgently needed.

Project content and aims

SoniVis aims to bridge the gap between sonification and visualization. To this end, we are working on a basis for a unified design theory for audio-visual analytics that combines the two modalities in a complementary way for exploratory data analysis. Within the framework of this project, we will

- outline the range of design options for complementary audio-visual data analysis approaches,

- examine the practical feasibility in the form of a design study based on the analysis of production processes, and

- conduct a controlled experiment to empirically compare different complementary audio-visual analytics approaches.

Method & Results

The project follows a human-centred and problem-driven research process. It is based on the paradigm of design science research and closely intertwines design and evaluation phases. As a result, approaches can be reviewed promptly, which mitigates the risk of non-informative results.

Expected research outputs are primarily design principles (core principles and concepts to guide design) and instantiations (digital artifacts, i.e. situated implementations in certain environments). Based on this, the researchers work towards a design theory for integrating interactive visualization and sonification for data analysis tasks.

Publications

Press Coverage

New article on audio-visual data analysis published

04/22/2024 Medium: MeinBezirk.at

Editor: Stefanie Machtinger

Connecting hearing and seeing

06/30/2022 Researchers at FH St. Pölten link our hearing and seeing for the analysis of data

"Bringing seeing and hearing into harmony"

01/22/2021 Medium: Die Presse

Author: Veronika Schmidt